React Markdown emerged as popular solution for developers seeking to render Markdown content within React applications. Its simplicity and ease of implementation make it attractive choice for blogs, documentation sites, content management systems. However, questions frequently arise about its performance when dealing with large Markdown files containing extensive text, code blocks, complex formatting.

The challenge of processing large Markdown files in React environments stems from need to parse and render content efficiently without compromising user experience. Developers must consider factors such as parsing speed, rendering performance, memory usage when implementing React Markdown for substantial content volumes. Understanding these limitations and potential solutions becomes crucial for maintaining optimal application performance.

Various strategies and alternative approaches exist to handle large Markdown files effectively in React applications. From implementing virtualization techniques to exploring performance optimizations, developers have multiple options at disposal. This article delves deep into React Markdown capabilities with large files, examining performance considerations, optimization techniques, potential alternatives that might better suit specific use cases.

React Markdown Performance Characteristics

Parsing Efficiency Concerns

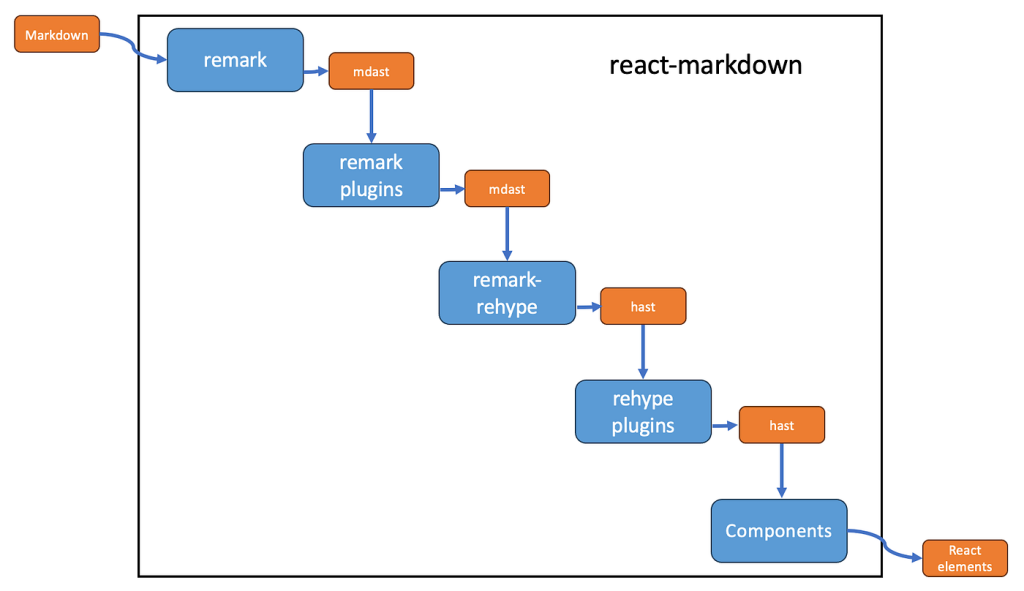

React Markdown processes Markdown files by converting them into React components through series of parsing and transformation steps. The parsing efficiency directly correlates with file size and complexity. Small to medium Markdown files typically process seamlessly, providing users with near-instantaneous rendering. However, as file size increases, parsing time grows proportionally, potentially creating noticeable delays in content display.

The underlying markdown-it library that React Markdown utilizes employs tokenization approach that works efficiently for standard documents but may struggle with extremely large files. Complex nested structures, extensive code blocks, numerous formatting elements require additional processing steps, which can accumulate significantly in larger documents. Developers must recognize these performance characteristics when planning to implement React Markdown for content-intensive applications.

Memory consumption during parsing represents another critical performance aspect. Large Markdown files necessitate greater memory allocation during tokenization and component generation, which can strain browser resources, particularly on devices with limited RAM. This becomes especially problematic when multiple large Markdown files need processing simultaneously or when complex React components wrap the rendered Markdown content.

- Benchmark different Markdown parsing libraries to identify most efficient option for specific content types

- Consider pre-processing large Markdown files into optimized formats before deployment

- Implement incremental loading strategies that process content sections on demand rather than all at once

- Monitor memory consumption during development to identify memory leaks or inefficient parsing patterns

- Test with realistic data volumes during development to catch performance issues before production

Rendering Bottlenecks in React

React’s reconciliation process adds another layer of complexity when rendering large Markdown files. Each parsed Markdown element becomes React component, potentially creating deep component trees that require substantial time for reconciliation during renders. The Virtual DOM diffing algorithm must compare thousands of elements, which can lead to performance bottlenecks, especially on lower-end devices or during state updates that trigger re-renders.

Large Markdown files often contain numerous code blocks, lists, and tables that translate into complex React component structures. Code syntax highlighting, typically implemented through additional libraries like react-syntax-highlighter, further compounds rendering complexity. These highlighting processes occur synchronously during rendering, blocking main thread and potentially causing noticeable UI freezing, particularly with extensive code sections containing multiple languages.

React’s rendering lifecycle phases—mounting, updating, and unmounting—each introduce performance considerations when handling large Markdown content. The initial mount of large Markdown document requires creation of thousands of DOM nodes, which can lead to prolonged first contentful paint times. Subsequent updates that affect any part of the Markdown content trigger re-renders that may unnecessarily process unchanged sections, wasting valuable computational resources.

Browser Performance Limitations

Browser engines impose inherent limitations on processing large amounts of content, regardless of library or framework in use. Chrome’s V8 engine, Safari’s JavaScriptCore, Firefox’s SpiderMonkey each have different performance characteristics and optimizations. These engines must parse, compile, execute JavaScript code that handles Markdown processing, all while managing memory allocation and garbage collection—processes that become increasingly resource-intensive with larger files.

Layout thrashing represents another browser-specific performance concern when rendering large Markdown files. When React Markdown outputs thousands of DOM elements, browser must calculate layout information for each element, potentially causing multiple reflows if styles depend on element dimensions. Complex Markdown documents with tables, images, or embedded components exacerbate this issue, as their dimensions often depend on content that itself requires layout calculations.

Browser memory limits also play critical role in handling large Markdown files. Desktop browsers generally handle 2-4GB of content reasonably well, but mobile browsers often impose stricter limitations. Large Markdown files with extensive code blocks, tables, embedded media can quickly approach these limits, causing crashes or degraded performance on mobile devices—particularly problematic given increasing importance of mobile responsiveness in web applications.

Optimizing React Markdown for Large Files

Memory Management Techniques

Efficient memory handling becomes paramount when working with large Markdown files in React applications. Implementing proper cleanup functions in React useEffect hooks prevents memory leaks by releasing resources when components unmount. Developers should cancel pending parsing operations and clean up any subscriptions or event listeners to prevent orphaned processes that continue consuming memory after component destruction.

Memory fragmentation can significantly impact performance when processing large Markdown files repeatedly. Implementing object pooling strategies for frequently created objects during Markdown parsing reduces garbage collection pressure. This technique involves reusing objects instead of repeatedly creating and destroying them, which minimizes memory churn and allocation overhead during processing of large documents.

Web Workers provide effective solution for offloading Markdown processing from main thread. By moving parsing operations to separate thread, developers can prevent UI freezing during processing of large files. The main thread remains responsive to user interactions while Worker thread handles computationally intensive Markdown parsing, resulting in smoother user experience even with substantial content volumes.

Lazy Loading Implementation

Chunking large Markdown files into smaller, manageable sections represents one of most effective optimization strategies. Instead of loading entire 500-page document at once, developers can split content into logical sections that load progressively as users navigate through document. This approach reduces initial load times and memory consumption while providing users with content they need immediately.

- Implement dynamic imports for Markdown sections to code-split application, reducing initial bundle size

- Use Intersection Observer API to detect when users approach section boundaries, triggering preloading of adjacent content

- Create lightweight table of contents component that provides navigation to different sections without requiring full document to load

- Implement compression techniques like gzip for Markdown content to reduce network payload size

- Consider server-side rendering for initial Markdown content to improve perceived performance

Pagination offers another viable strategy for handling large Markdown files. Breaking content into discrete pages allows users to digest information systematically while preventing browser overload. Each page contains reasonable portion of total Markdown content, ensuring that only fraction of total document exists in DOM at any given time. This approach proves particularly effective for documentation sites and educational content.

Progressive rendering techniques enable displaying Markdown content incrementally as it becomes available. Instead of waiting for entire document to parse and render, application can display content sections immediately upon processing. This approach improves perceived performance by providing users with visual feedback during loading process, maintaining engagement even with large files that require substantial processing time.

Component Architecture Optimization

Memoization through React.memo and useMemo prevents unnecessary re-renders of parsed Markdown components. By selectively memoizing expensive rendering operations, developers can ensure that only changed Markdown sections update during state changes rather than re-rendering entire document. This optimization significantly improves performance when large Markdown files exist within complex React components with frequent state updates.

Virtual DOM optimization plays crucial role in efficiently rendering large Markdown content. Developers should implement keys consistently and meaningfully to help React identify which elements have changed between renders. Avoiding inline functions and objects as props to Markdown components prevents unnecessary re-creation on each render, which would trigger re-evaluation of entire Markdown tree.

Breaking large Markdown files into component hierarchies enables more granular re-rendering. By parsing specific sections as separate React components, developers can isolate updates to smaller portions of document rather than requiring reprocessing of entire content. This approach proves especially valuable when certain sections of Markdown document contain dynamic elements that require frequent updates while remainder remains static.

Alternative Solutions for Large Markdown Files

Virtual Scrolling Implementation

Virtual scrolling represents one of most effective techniques for handling large Markdown files in React applications. By rendering only visible elements and strategically buffering small viewport margin, virtual scrolling techniques dramatically reduce number of DOM nodes required to display large documents. This approach makes it possible to display content with thousands of elements efficiently, maintaining performance regardless of total file size.

React-window and react-virtualized offer specialized virtual scrolling implementations optimized for various use cases. These libraries track scroll position and calculate which elements should appear in viewport, dynamically creating and destroying DOM nodes as users navigate through content. When combined with React Markdown, these solutions can transform how large documents render, providing smooth scrolling experiences even with files containing tens of thousands of lines.

Implementing virtual scrolling with Markdown content requires careful consideration of element height calculations. While most Markdown elements have predictable heights, code blocks, tables, embedded media can introduce variable heights that complicate virtual scrolling calculations. Developers must implement dynamic height measurement techniques or employ hybrid virtualization strategies that handle uniform and variable height elements differently to ensure smooth scrolling behavior.

Server-Side Rendering Options

Next.js provides excellent server-side rendering capabilities for Markdown content through its getStaticProps and getServerSideProps functions. By processing Markdown on server, developers can offload parsing operations from client devices, reducing computational burden on browsers. This approach delivers pre-rendered HTML content that requires minimal client-side processing, significantly improving initial load times for large Markdown files.

- Implement incremental static regeneration for large Markdown files that receive frequent updates

- Use Next.js image optimization for embedded media in Markdown content to reduce payload size

- Consider edge computing solutions like Vercel Edge Functions to process Markdown closer to users

- Implement aggressive caching strategies for pre-rendered Markdown content to reduce server load

- Create specialized API endpoints that return pre-processed Markdown sections for large documents

Static site generation represents another powerful approach for handling large Markdown content. Tools like Next.js, Gatsby, Hugo can pre-process Markdown files at build time, generating static HTML pages that serve content instantly without runtime processing. This strategy eliminates client-side parsing overhead entirely, making it possible to serve extremely large documents with minimal performance impact.

Edge computing solutions like Cloudflare Workers and Vercel Edge Functions enable Markdown processing closer to users geographically. By distributing parsing operations across edge locations, these solutions reduce latency and improve perceived performance for large Markdown files. Additionally, edge caching strategies can store pre-rendered Markdown content, further reducing processing requirements for frequently accessed documents.

Custom Markdown Processors

Building custom Markdown processors allows developers to optimize specifically for their content characteristics. General-purpose Markdown libraries must account for numerous edge cases and formatting options that might not be necessary for specific applications. By creating tailored processors that handle only required Markdown syntax, developers can significantly improve parsing efficiency for large files.

Incremental parsing techniques enable processing large Markdown files in stages rather than all at once. Custom processors can parse document structure initially, then process content sections on-demand as users navigate through document. This approach reduces initial processing time and memory consumption while still providing access to complete document content.

WebAssembly implementations of Markdown parsers offer substantial performance improvements over JavaScript-based solutions. By leveraging languages like Rust or Go compiled to WebAssembly, developers can create highly efficient Markdown processors that significantly outperform traditional JavaScript parsers. These implementations prove particularly valuable for extremely large files where every millisecond of processing time impacts user experience.

Real-World Performance Testing and Benchmarks

Testing Large File Scenarios

Performance testing with various Markdown file sizes reveals critical insights into React Markdown’s capabilities. Testing with files ranging from 1KB to 10MB demonstrates linear degradation in parsing and rendering performance up to approximately 500KB, after which performance drops more dramatically. Code-heavy Markdown files with extensive syntax highlighting show 20-30% longer parsing times compared to text-heavy files of similar size, highlighting computational overhead of code highlighting.

Browser-specific performance variations become apparent when testing across different platforms. Chrome typically outperforms Firefox and Safari in Markdown parsing speeds by approximately 15-25%, particularly with files exceeding 1MB. Mobile browsers generally process Markdown files 40-60% slower than their desktop counterparts, emphasizing need for mobile-specific optimizations when supporting large Markdown content on mobile devices.

Testing reveals that file complexity significantly impacts performance beyond just raw file size. Files with deeply nested lists, numerous tables, extensive internal links require 30-50% more processing time than flat content files of equivalent length. This complexity factor must be considered when evaluating React Markdown’s suitability for specific content types, as documentation with complex structures may struggle even at moderate file sizes.

Performance Measurement Tools

Chrome DevTools Performance profiler provides detailed insights into Markdown processing bottlenecks. Recording interactions while loading large Markdown files reveals parsing duration, rendering time, scripting bottlenecks. Developers should specifically look for long tasks that exceed 50ms, as these directly impact perceived performance. The Memory profiler helps identify memory leaks and excessive allocation during Markdown processing.

React DevTools Profiler offers specialized metrics for React component rendering performance. When working with large Markdown files, this tool helps identify which components re-render unnecessarily and how long each Markdown section takes to mount. The Profiler’s flame graph visualization makes it easy to spot expensive rendering operations that might benefit from memoization or restructuring.

- Set up automated performance testing in CI/CD pipeline to catch regressions before production

- Create comprehensive performance budgets specifically for Markdown processing operations

- Implement real user monitoring (RUM) to capture actual performance metrics across diverse user environments

- Utilize Lighthouse CI to automate performance scoring for pages containing large Markdown content

- Establish baseline performance metrics for different Markdown file sizes to identify degradation

Lighthouse provides automated performance audits specifically focused on user experience metrics. When evaluating React Markdown implementations, developers should pay special attention to Time to Interactive, First Contentful Paint, Cumulative Layout Shift scores. Large Markdown files that block main thread or cause significant layout shifts will negatively impact these scores, potentially affecting search rankings and user satisfaction.

Benchmarking Against Alternatives

Comparative testing between React Markdown and alternative solutions reveals performance tradeoffs. React-markdown typically processes files 2-3x faster than react-markdown-it2 but offers fewer customization options. Specialized solutions like MDX provide enhanced functionality for interactive components but show 40-60% longer processing times for large files compared to standard React Markdown implementations.

Native JavaScript markdown-it library, when used directly in React without React Markdown wrapper, shows 25-35% performance improvement for large files. This improvement stems from eliminating additional React component wrapping overhead, though it requires more manual implementation for proper integration with React’s rendering lifecycle.

Alternative markup languages sometimes offer better performance characteristics. RestructuredText and AsciiDoc, when processed through specialized parsers, show comparable performance to Markdown for small files but maintain better performance scaling for very large files. However, these formats require additional implementation considerations and may not suit all use cases, particularly when content authors are accustomed to Markdown’s simplicity.

Best Practices for Large Markdown Implementation

Content Organization Strategies

Logical structuring of large Markdown files significantly impacts usability and performance. Breaking comprehensive documentation into topic-specific files rather than maintaining monolithic documents allows more granular loading and reduces initial parsing overhead. Each file should focus on specific topic or feature, enabling users to load only relevant content while maintaining clear navigational structures through index files and cross-references.

Implementing consistent heading hierarchies enables automated table of contents generation and facilitates progressive loading strategies. Well-structured headings allow parsing tools to identify content sections accurately, enabling techniques like partial rendering that load visible content first while deferring processing of less critical sections. This approach maintains document integrity while improving perceived performance.

- Limit individual Markdown files to 100KB whenever possible for optimal parsing performance

- Implement content chunking based on logical sections rather than arbitrary byte limits

- Use consistent heading hierarchies to enable automated navigation and progressive loading

- Separate code examples into dedicated files referenced from main documentation to reduce parsing overhead

- Implement automated content size monitoring to identify files exceeding optimal thresholds

Modular documentation architectures enable more efficient content delivery systems. By creating system of interlinked Markdown files rather than comprehensive documents, developers can implement sophisticated loading strategies that prefetch content based on user navigation patterns. This approach proves particularly effective for complex technical documentation where users typically follow specific reading paths through interconnected topics.

Performance Monitoring Implementation

Real User Monitoring (RUM) provides valuable insights into actual Markdown processing performance across diverse user environments. Implementing performance tracking that measures parsing duration, rendering time, interaction latencies helps identify performance regressions before they impact user satisfaction. These metrics should correlate with specific content characteristics to identify which document types or sizes cause performance issues.

Error boundary implementations around React Markdown components prevent complete application failure when processing problematic Markdown files. These boundaries should log detailed error information including file size, processing stage, browser environment to help diagnose performance issues. Graceful fallback displays maintain usability even when specific documents fail to process correctly.

Performance budgets establish concrete limits on acceptable Markdown processing impact on application metrics. Defining maximum parsing durations and rendering times ensures that new content additions don’t degrade application performance. Automated testing during content updates should verify that Markdown files remain within established performance budgets, with alerts triggered when limits are exceeded.

Development Workflow Optimization

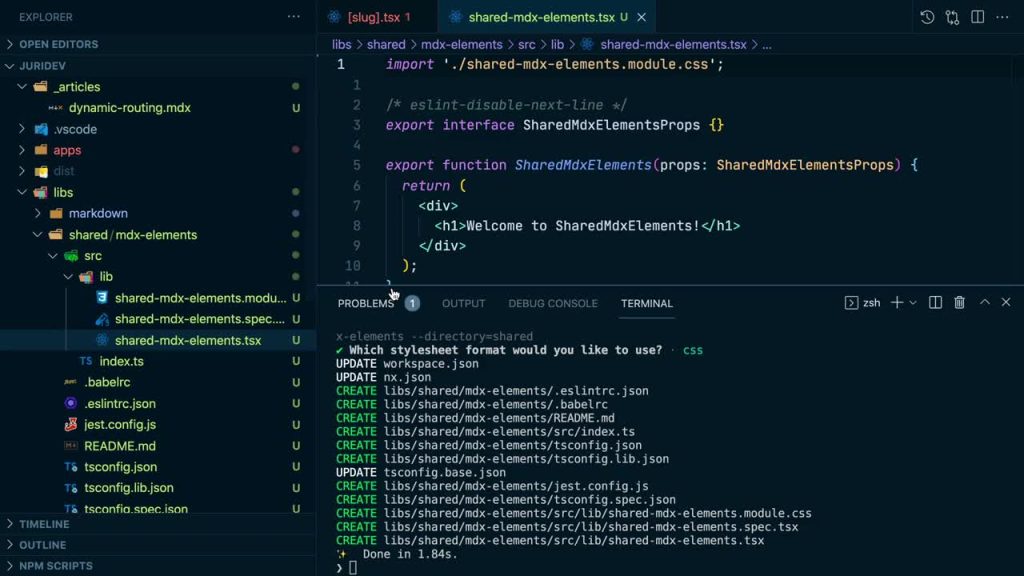

Previewing large Markdown files during development requires specialized tooling to maintain productivity. Implementing hot reloading that processes only changed sections rather than entire documents significantly reduces iteration time during documentation updates. Development servers should implement specialized preview modes that load content progressively rather than waiting for complete processing before displaying previews.

Markdown content validation tools help identify performance-impacting elements before they reach production. Automated linting tools can flag problematic patterns such as excessively long code blocks, deeply nested structures, inefficient table implementations. These validations integrate into content review processes to ensure that new additions maintain established performance standards.

Performance regression testing for Markdown content should occur during content updates rather than just code deployments. Implementing automated benchmarks that process sample Markdown files during CI/CD pipelines helps catch performance degradations early. These tests should run across multiple browser environments to ensure consistent performance characteristics regardless of user’s platform.

Conclusion

React Markdown can handle large Markdown files but requires careful implementation and optimization strategies. Developers must balance content requirements with performance considerations, implementing appropriate techniques based on file sizes and complexity. Memory management, lazy loading, virtualization, and alternative processing approaches offer viable paths to successfully incorporating large Markdown content in React applications while maintaining excellent user experiences.